A Line for Thinking

The question concerning the em dash—or, writing that worries.

1 :: Once I start writing, it doesn’t take me long to get to an em dash. For better or worse, the mark comes naturally as I compose sentences that, I suppose, are more complicated—or at least longer—than most, and which are prone to revel a little too much in a frolic architecture of sound, syntax, and allusion that may give uncommitted readers pause, if not drive them away entirely. The versatility of the em dash—it can set off an aside; introduce immediate elaboration of an idea (as opposed to the way a parenthesis can cushion the blow of its delivery); it can propel the reader through a sudden door in a sentence (in contrast to a colon, which announces said door and holds it open, politely pointing the way); it can signal a change in direction of attention for author or audience, the way a jump-cut severs cinematic continuity; it can unlock unexpected energy in unfolding prosody, just as, to stretch the point beyond its comfortable scale, Stravinsky’s incisive rhythms startle melody; it can, in short, inject inspiration as it occurs, without waiting for the end of a sentence to allow a fresh start—belies the simplicity of the shape that embodies it: a line that does not connect two points so much as inscribe a frisson of surprise or an expanse of possibility between them.

It is meant to disrupt—gently or violently, sometimes playfully—an expected pattern, to reset the frame of expression or focus. As such, it is ironic that it has been widely regarded as a sign of AI composition, a mark of ChatGPT-generated prose, even though such prose is summoned via gargantuan feats of pattern-identifying calculation. “All sorts of people seemed mystifyingly confident,” wrote Nitsuh Abebe in a column commenting on the phenomenon in the New York Times, “that no flesh-and-bone human had any use for this punctuation, and that any deviant who did would henceforth be mistaken for a computer.” Embracing his own deviancy in this regard, a deviancy I happily share, Abebe references the punctuation’s storied pedigree: “The dash is a time-honored and exceedingly normal tool for constructing sentences!” he exclaims.

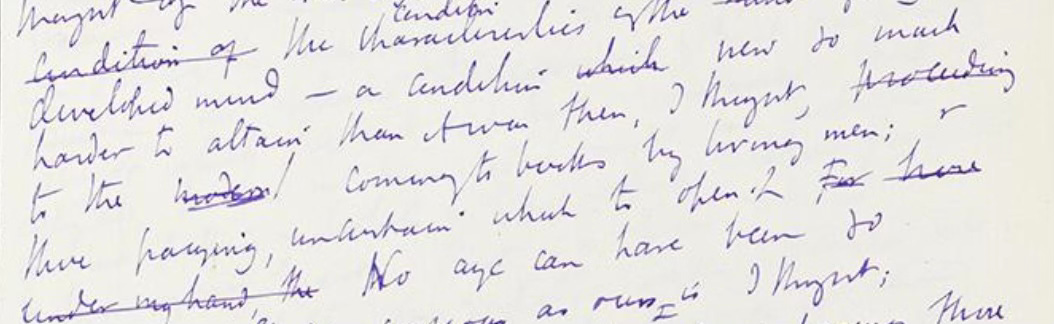

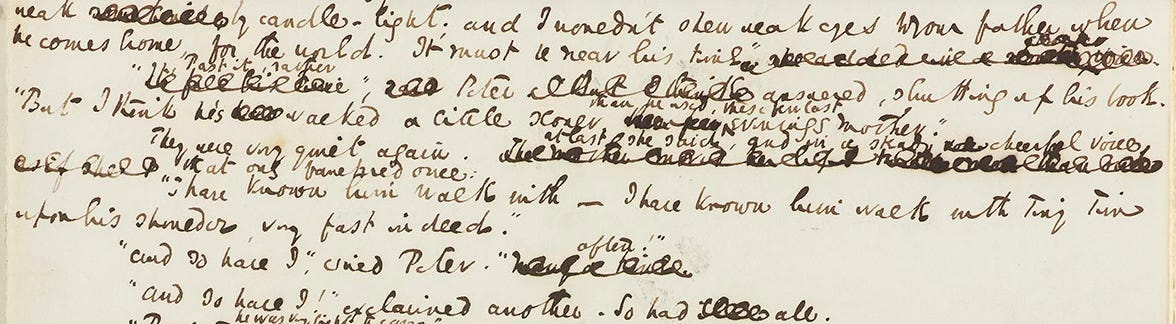

He’s right: one doesn’t need to browse far through English literature to discover that the mark had established provenance long before its adoption by the machine. Jane Austen used it liberally in dialogue, and was fond of combining it with a prefatory semicolon, as the first chapter of Sense and Sensibility (1811) repeatedly bears witness: “He was neither so unjust, nor so ungrateful, as to leave his estate from his nephew;—but he left it to him on such terms as destroyed half the value of the bequest.”1 It’s there in the opening of Dickens’s Bleak House (1853), third sentence: “Smoke lowering down from chimney-pots, making a soft black drizzle, with flakes of soot in it as big as full-grown snowflakes—gone into mourning, one might imagine, for the death of the sun.”

We come upon it even sooner at the beginning of Henry James’s The Portrait of a Lady (1881):

Under certain circumstances there are few hours in life more agreeable than the hour dedicated to the ceremony known as afternoon tea. There are circumstances in which, whether you partake of the tea or not—some people of course never do,—the situation is in itself delightful.

Then again at the beginning of James’s second paragraph: “It stood upon a low hill, above the river—the river being the Thames at some forty miles from London.”

Leaving the nineteenth century for the twentieth—without, it should be remarked, stopping to glean evidence from the poetry of Emily Dickinson, whose verses are as drunk with dashes as they are lit with her mind’s forked lightning, and which deserve to be pondered as their own singularity—we find, at the start of Mrs. Dalloway (1925), the em dash surviving the fractures of modernism in fine fettle:

Mrs. Dalloway said she would buy the flowers herself.

For Lucy had her work cut out for her. The doors would be taken off their hinges; Rumpelmayer’s men were coming. And then, thought Clarissa Dalloway, what a morning—fresh as if issued to children on a beach.

One needn’t focus on the classics to find evidence of the em dash’s pedigree. The idea that its use was uncommon before ChatGPT is undercut by our national copy chief, Benjamin Dreyer, who, in his 2019 book, Dreyer’s English: An Utterly Correct Guide to Clarity and Style, addressed general profligacy in this regard directly: “Likely you don’t need much advice from me on how to use em dashes, because you all seem to use an awful lot of them.” Similarly, Bryan Garner, in the fifth edition of his Modern English Usage, published in November 2022, the same month that the ChatGPT tide of generative prose began to crash upon the shore of popular imagination, and so based on observation of usage trends preceding the tsunami that soon followed, stated:

The em-dash [sic] was once the most underused punctuation mark in American writing. But during the early 21st century, it reached a sort of cult status, with memes on social media about its use. There is also a growing sentiment that em-dashes should have their own designated key on keyboards.

That keyboard piece is important. A dash is a natural mark for a hand to make,2 a simple line that bridges a gap between thought and word, one thought and another, one direction and a turning, one moment and the next while mind and hand traverse the blank page, sometimes the former leading the way, often enough the latter. A keyboard interrupts the gestural ease with which writers reach for an em dash, breaking its flow in search of a shift key or a command+ formula that remains unintuitive even after repetition. Which is why, perhaps, as one detractor quoted by Abebe insists, “Nobody uses the em dash in their emails or text messages. This punctuation is irrelevant to everyday use cases.”

As Abebe suggests, there is a difference between such strictly digital “use cases” and other types of writing, reflecting a “shift in our perception of what writing even is.” If one views writing through the lens of current technology (which ensures a very sharp focus on “use cases”), one is more likely—being unbound from older technologies (paragraph, page, book, printing) that have shaped our vision of composition and its contents for the better part of a millennium—to conflate the production of text in today’s technological realm, in which it is often a record of speech-like phrases (if not oral utterance itself) rendered in letters on a screen, with the pursuit of exposition or eloquence, through a process that is paradoxically both more deliberate and more emergent than the trail of words our devices direct into the cloud and call back to earth—on another device—again.

It’s no special insight to see great gaps of time, taste, and temper between the literary prose I sampled above and the irruptions of digital text our devices distribute across thin air, but the conversation around the em dash and ChatGPT ignores the category confusion that comes from talking about “writing” as a unitary activity—as if the ways and means of a text message were no different from those of a personal essay, the animating impulse of a marketing email the same as that for a legal brief, the fabrication of fiction indistinguishable in manner and method from the drafting of a cover letter or a contract, a news story or a research paper; as if different kinds of composition do not have functions and purposes with their own demands that may have meaning and relevance beyond the AI-centered context with which the conversation often begins and ends. In large degree, that’s the larger point I’m after: while the technology itself can be startlingly proficient at mimicking the traits and tenor of most types of composition, the anxious discourse about how we write in the context of AI, unleashed by the advent of ChatGPT and other generative platforms that followed in its wake, has treated writing the way LLMs treat words: as one token after another, rather than as an unfolding of meaning as it is being made. The fact that most writing we do matches up with this tokenized conception of how words work explains the technology’s evident utility but casts a baleful shadow on our understanding of expression itself, to say nothing of the avenues of human flourishing expression enables as it parses experience.

Something of the same failure of both evidence and imagination—the insistence on treating a multifarious creature as a single-minded entity—pertains to most discussions of artificial intelligence in its own right, as Arvind Narayanan and Sayash Kapoor explain at the beginning of their cheekily named but thoughtfully argued book, AI Snake Oil (about, in the words of its subtitle, what artificial intelligence can do, what it can’t, and how to tell the difference):

Imagine an alternate universe in which people don’t have words for different forms of transportation—only the collective noun “vehicle.” They use that word to refer to cars, buses, bikes, spacecraft, and all other ways of getting from place A to place B. Conversations in this world are confusing. There are furious debates about whether or not vehicles are environmentally friendly, even though no one realizes that one side of the debate is talking about bikes and the other side is talking about trucks.

As the authors elaborate, considerations of fuel and function, speed and distance, capacity and utility are blurred by the broad use of a single term. “Now,” the authors continue, “replace the word ‘vehicle’ with ‘artificial intelligence,’ and we have a pretty good description of the world we live in.” Reliance on a blanket term to encompass many different applications, developments, and potentials blinkers our perception of what we’re talking about when we talk about AI; as it does when we speak about “writing in the age of AI,” as if writing is a single thing rather than, like the world according to Louis MacNeice, incorrigibly plural.3 That we feel compelled to do so is an artifact of our habituated consideration of technology, which has, for decades, been framed by technologists and their attendant commentariat as a reaction to what they’ve productized; in the case of writing, it’s as if—despite the fact that LLMs conjure their magic not from computation alone but from the resource-rich strata of human knowledge and creativity the systems plunder—what all the legible treasure they mine with probabilistic math was for before it became training data can now be confidently defenestrated along with countless babies, birthed or as yet unborn, engendered by human ingenuity out of necessity, invention, fear, faith, love, or play.

The hype around AI comes with implicit instructions, supplied by the technology’s biggest champions, that one must follow to have a credible voice in any discussion of the technology: “What we’ve built is amazing—just look what it can do. Now prove to us why anything you’ve ever valued before is still relevant.” Well, what’s been built is indeed amazing, replete with palpable benefits in fields such as medical research, scientific inquiry, coding, sociological analysis, industrial efficiency, financial optimization, and so on; more important in the long run may be as yet unfathomable innovations across all domains and disciplines, even education and the creative arts, if we can summon the resourcefulness to see past the piratical imperatives and often mind-numbing blandness of the outputs generated by current productization tracks, which lock the technology into interfaces—chat, for most folks—that are formally limiting and substantively stultifying, at least as far as writing is concerned. Given the economic exigencies that ultimately constrain the adventurousness of venture capital, these tracks, with the lack of profit-restricting guardrails like validating mechanisms, sycophancy blockers, or slop prophylaxes, may well become permanent enough to enshittify the platforms’ procrustean beds.4

But railing against such dangers—as much a byproduct of user experience decisions and go-to-market strategies as qualities inherent in the technology itself—ignores a salient factor about contemporary “writing” that we are slow to admit: even before its turbocharging by AI, slop already filled the content streams feeding so much of what we call the “creative economy.” For social media posts and press releases, marketing campaigns dressed up as “content” and clickbait disguised as “news,” endorsements in the service of personal branding and think pieces generated to garner engagement rather than thought—indeed, for nearly all manner of internet copy—the audience for years now has not been readers but the A/B test, blind to any meaning beyond the tally that salutes its power. A rather dull knife that adherents are quick to mistake for Occam’s razor—a mistake with considerable consequences for the rest of us, since those adherents determine so much of what surfaces in our screen-based life—the A/B test has devolved into the reductio ad absurdum of a model of the world plotted in binary coordinates in order to redraw reality in virtual spaces. AI slop is on the one hand the natural outgrowth of a system that demands—and rewards—quantity over quality, speed and regularity over originality, provocation over deliberation, and, on the other, its ideal product: a bottomless well of supply.

The stripping of the subjective investment of human effort from writing predates the mechanic mills of generative AI. In The Shallows: What the Internet Is Doing to Our Brains (2010), the book which grew out of his prescient 2008 Atlantic article, “Is Google Making Us Stupid?,” Nicholas Carr discusses Google’s relentless testing of elements of their customer-facing design, referencing a remark by Marissa Mayer, at the time the company’s vice president of search products and user experience:

In one famous trial, the company tested forty-one different shades of blue on its toolbar to see which shade drew the most clicks from visitors. It carries out similarly rigorous experiments on the text it puts on its pages. “You have to try and make words less human and more a piece of the machinery,” explains Mayer.

What Mayer was chasing LLMs have caught: they make language part of the machinery in previously unimagined ways, teaching words to do math, turning them into numbers that can then be graphed to reveal patterns reflected in the company they keep, 1s and 0s ever spinning in an arcane choreography of calculation to produce by inscrutable means scrutable output—which, built on immense training sets of human composition, resembles that composition in form if not feeling, with syntactical and semantic coherence generated with probabilistic assurance of plausibility—math upon math upon math!—making it a passable substitute for much of the written content summoned into existence by contemporary commerce, education, and culture, especially that which has a predetermined or well-defined function, or fits its meaning into templated forms.5

The fact is that much text typed today in more than bursts of immediate purpose—from high school English themes to corporate reports, social media posts to formulaic fiction in genres that privilege predictability—might be well served by the speed, focus, and unfailing, unfeeling attention of ChatGPT and its ilk, if what’s to be valued is the product of the exercise rather than what can be gleaned by the writer in the process of producing it, or the reader in the experience of reading it. A school essay should be a blank page that, on its way to completion, is fitfully filled with energies of agency, anxiety, and articulation, but few teachers can devote the time to address such slippery inputs, or are allowed to account for them in their grading rubrics, while few students are equipped by their education to credit composition in this way—nor are they encouraged, much less applauded, if they do. Opinion pieces by professional writers across the digital landscape—with their headlines and taglines, framing of argument and consequent structure, even the arguments themselves (along with the always on-call supply of sociological, psychological, and economic studies tapped in support), drawn from a predetermined and unexamined inventory of topics and postures, to say nothing of hot takes bouncing off the rapidly cooling surface of other takes—often seem like exercises in a game of charades in which everyone has the answer in full view from the start, but is determined nonetheless to go through the attention-getting pantomime.

In so much of the muchness that we read all day long on our small and large screens, writing has become a form of packaging that is impervious to infiltration by the liberating if demanding uncertainties it should encourage. That the machine can mimic so many varieties of this fungible armature is amazing at first blush, admittedly wondrous to behold as one watches prose scamper across one’s screen; that the quality is often only “good enough” is a disappointment offset for many by the realities of supply and demand: “good enough” is all most of the writing being mimicked needs to be. We’ve dug a hole for ourselves that slop can fill, and now we have machines to fill it frictionlessly. Having made so much of the world machine-readable, technology seems poised to make it machine-writable as well.

2 :: What does all this have to do with the em dash? The bother around its use, and its consequent disavowal by writers not wanting to have their work branded machine-made, is a telling example of the manner in which AI sucks all conversation into the room it occupies, with the same voracious appetite by which it hoovers up any data that is not tied down (and much that, legally speaking, thought itself secure). Let’s consult Benjamin Dreyer again, for he reads the metaphoric room with an aptly gimlet eye:

It irks me no end, I must note, that well-intentioned and presumably honest students (and others) should have to spend an iota of an atom of a second second-guessing their writing for fear that someone who might as well be brandishing a divining rod or a planchette is going to indict them for cheating over the wielding of a piece of punctuation so utterly commonplace …

So commonplace and yet—in the current of inchoate composition—so versatile, as I said at the start. If AI’s use of em dashes is unexceptionable, appropriate grammatically and not worthy of much notice (as far as I can tell: quoting one, or ten, or a hundred examples seems superfluous when the store of them is infinite and malleable), the deployments seem cosmetic rather than dynamic, if only because the sentences they decorate carry in their forward motion no anxiety of expression: the next token is assuredly coming without hesitation or regret.

An em dash in the worked—even worried (in the dog-and-bone sense)—writing of Dickens, James, Woolf, and countless lesser mortals is an affordance of apprehension, a gateway in what might otherwise be a dead end, or an unforeseen turning into a corridor that leads from one room into another; it enables a writer to inquire into, rather than predict, what might be coming next.

The word for em dash in German is Gedankenstrich—“literally translated,” Andrea Wulf tells us following an em dash of her own, “as ‘a line for thinking,’ a pause to breathe and think.”6 Which makes it almost comic that this form of punctuation has become a flash point in the AI detection contretemps befuddling writers, teachers, and students—it’s as if the machine is trolling its critics more subtly than those critics, possessed by a faith in close reading, can credit. “A pause to breathe and think” co-opted by a mechanical process that, if we are speaking carefully, does neither, is an image that might hold pride of place in the fun house mirror of our contemporary reflections on technology.

At its core, language—in the words of Wolfram Eilenberger’s exegesis of the faith of Walter Benjamin—is not merely “a means for conveying valuable information to others but a medium in which we become aware of ourselves and all the things that surround us.” At its core, and in the right hands: which is to say that an em dash in worked and worried prose is a sign of the writer’s presence, an assertion that the meaning in the making is subject to her touch, that the time a sentence carries can be marked not in an eternal flipping of virtual switches but in her breaths and attentions, her hesitations and regard. What the em dash does is add texture—call it flux, friction, fracture—to the flow of a sentence, an almost tactile handling of the line of thought. Its use—we have ascended to the aspirational plane—exhibits a feel not only for language, but for experience, a tact for handling the world as it is fondled, caressed, considered, pondered by consciousness, at least upon the page.

One might argue, as William James did, and illustrated with italic emphasis his own, that our powers of attention, however strong, always carry within their workings dashes of their own: “a little introspective observation will show anyone that voluntary attention cannot be continuously sustained—that it comes in beats.” Further introspection might reveal to any writer what it suggests to this one: that those beats, in their persistent intermittency, are at the heart of human expression, a pulse between mind and word that is a vital sign of meaning that hasn’t yet been made. Are em dashes relics of that venerable if often foolish pursuit, a gesture of embodied thinking that will—in technology’s stripping of the altars of all that’s not recursive—be rendered obsolete?

3 :: In 1915, near the end of his life and just two years after Niels Bohr had applied quantum insights to propose a new model of the atom, upending contemporary scientific understanding and resetting it on a mathematical footing, the renowned British chemist and physicist William Crookes, who had himself made earlier elemental discoveries into the atomic structure of the physical world, wrote that chemistry would thereafter “be established upon an entirely new basis. . . . We shall be set free from the need for experiment, knowing a priori what the result of each and every experiment must be.” Reading Crookes’s pronouncement some three decades after it was penned, the young Oliver Sacks, as he neared the end of the “chemical boyhood” he so engagingly describes in his memoir Uncle Tungsten, was filled with foreboding:

Did this mean that chemists of the future (if they existed) would never actually need to handle a chemical; might never see the colors of vanadium salts, never smell a hydrogen selenide, never admire the form of a crystal; might live in a colorless, scentless mathematical world? This, for me, seemed an awful prospect, for I, at least, needed to smell and touch and feel, to place myself, my senses, in the middle of the perceptual world.

As I type prompts into ChatGPT, Claude, and other AI interfaces with bemused apprehension, casting my supplications into their reservoirs of data superstitiously, hoping to ward off by such petitions the worst worries that the inscrutable power of machine learning evokes, I sense something akin to the presentiment of Sacks’s chemical anxiety. The facile self-assuredness with which the letters come scurrying across the screen gives me pause, even as the words chase after one another with only slight hiccups in their progress. Before I even assess the content it conveys, the prose proffered seems too easily won, the phrases that compose it lacking the weight and texture one comes to judge through handling words, assaying their combinations of sound and sense, the way the youthful Sacks handled his chemicals. It’s the lack of this tactile sensation, this palpability, that seems to me an awful prospect in the automatic summoning of sentences, rather than the elimination of effort per se. Good writing needs to smell and touch and stumble its way into the world to find purchase in it. There is a feel to expression, as to luck and love (learning, too).

Still, the syntactical choreography that fills the text box on the screen in response to my prompting is arresting, reflecting if not embodying intelligence, broadly defined; relevant in terms of focus if not accuracy, strictly speaking. If the self-organizing alacrity of the cogent, sometimes clever sentences enchants more than enlightens, it is lit, I can clearly see, with the unquestioning glow of a dawn seeping ineluctably through drawn shades, a light that one morning soon may overwhelm the habitable shadows of the rooms in which I write, and dwell. How blinding will its light prove?

1. In a delightful essay, “The History of Punctuation,” a review of Pause and Effect, Dr. Malcolm Parkes’s overview of that rich subject, Nicholson Baker dubs the usage illustrated in my Austen quote the “semi-colash,” grouping it with other now extinct specimens that were of profound importance in nineteenth-century English prose (including the commash, employed by Henry James in the passage from The Portrait of a Lady I share). Baker’s essay is collected in his book The Size of Thoughts.

2. Technically, the em dash per se is an artifact of type rather than the hand, since its width in print corresponds to that of the letter “m,” as opposed to the en dash, which occupies the space, in a printed line, of an “n.” I won’t go into the different uses of em dash versus en dash versus hyphen, much as distinctions between them scratch an itch of mine that is never entirely assuaged. Even though it is nominally rooted in typographic lore, the idea of the dash—whatever its precise length—flows from mind to hand, and vice versa, along a congenial current.

3. From the poet’s “Snow”: “World is crazier and more of it than we think / Incorrigibly plural.”

4. The concept and coinage of “enshittification” belong to Cory Doctorow, as detailed in his recent book of that title.

5. I am speaking here about the preponderant current uses of ChatGPT-generated prose. There are deeper mysteries surrounding the conversation between math and language that generative AI provokes, mysteries that will likely reveal new dimensions to the ways both words and numbers make meaning. Much of the “intelligence” unlocked by LLMs is inherent, I would suggest, in the language the machines mine, rather than an attribute of the machines per se. “Meaning happens to a sign—computationally in the case of LLMs; cognitively in the case of humans,” wrote Rob Nelson recently, channeling Charles Sanders Peirce and echoing Peirce’s friend William James’s assertion that truth happens to an idea. That reflects the way sentences work whatever their source; but meaning has different layers of resource, and if computation goes wider it doesn’t always go deeper, into the darkness of what has yet to be said.

6. Wulf’s translation is found in Magnificent Rebels: The First Romantics and the Invention of the Self, her wonderful collective portrait of the influential German philosophers and writers—Goethe, Schiller, Novalis, Fichte, Schelling, the Schlegels, among others—who gravitated to the university town of Jena at the end of the eighteenth century.
